Games have long been a part of childhood learning. When playing, we learn through trial and error how to get better at something. In hide-and-seek, a toddler will often “hide” somewhere obvious when they first play, but as they learn, they pick better hiding places. With the help of NCSA’s DeltaAI, a powerful GPU-based supercomputer designed for advanced AI research, researchers from the University of Pennsylvania (UPenn) took this basic concept, learning through “play,” and used it to train tiny, palm-sized autonomous flying robots to race to a finish line against each other.

Incentives for Efficient AI Training

Antonio Loquercio, assistant professor of electrical and systems engineering at UPenn, and his team recently had their paper accepted for presentation at the prestigious IEEE International Conference on Robotics & Automation (ICRA). He and his student and fellow author on the paper, Vineet Pasumarti, explained how their research uses the mechanics of play to help train AI.

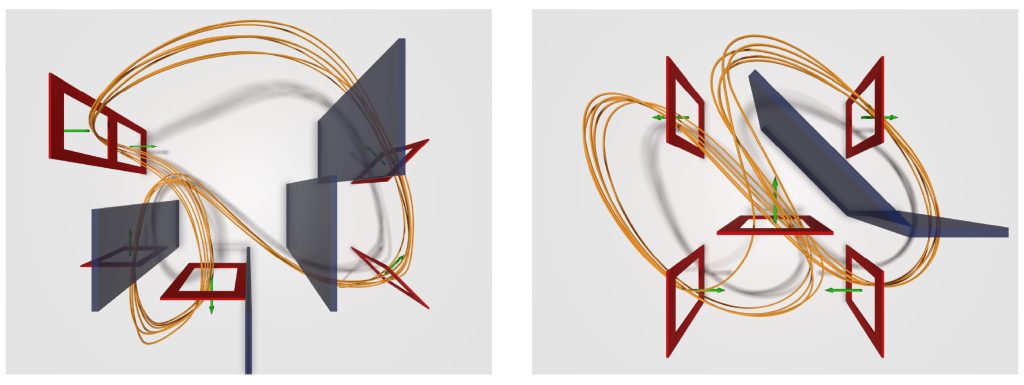

“We study a branch of machine learning called reinforcement learning,” said Loquercio. “In particular, we are interested in leveraging multi-agent reinforcement learning algorithms that allow for competitive and cooperative tactics to emerge. In this project, two autonomous racing drones compete with each other to accumulate rewards during training, which takes place in a high-fidelity simulation software, and these rewards facilitate the learning of behaviors that lead to good outcomes in the real world, i.e., the winning of races.”

Instead of giving the AI agents step-by-step instructions on how to complete the course and win, Loquercio and his team let them compete against each other to learn the best strategy. However, there was a twist in how the race was set up. Instead of rewarding the AI agents when they stayed on a certain path or followed a specific racing line at a specific speed, Loquercio’s team rewarded the AI when the drone it was piloting passed a gate or crossed the finish line before the opponent.

“We found that sparsifying the rewards (drone is rewarded in full only for reaching the next gate along the race track, rather than rewarded incrementally for minimizing distance to the next gate) as well as conditioning the reward on beating the opponent, allows for tactical, opponent-aware maneuvers to emerge,” said Loquercio.

Something fascinating began to happen when the AI-piloted drones were left to their own devices to plot the optimal path through the race course – they developed “human-like” strategies to win, including maneuvers familiar to anyone who has seen professionals race cars in real life.

“These maneuvers include smooth overtaking and defensive blocking to push the opponent towards subpar racelines,” said Loquercio. “The drones learned to overtake, block, and modulate risk. When approaching a slower opponent, the drone often passes the opponent without collision. Additionally, the drones learned to block/defend a raceline. For instance, our paper highlights a case in which we deployed our opponent-aware drones in a head-to-head race. In that example, the leading drone defended its marginal forward lead by flying a wide trajectory as it entered a gate, forcing the opponent to the outside and causing a collision with the gate frame. Lastly, our drones learned to fly slower and safer trajectories after observing the crashed opponent, as winning the race becomes guaranteed.”

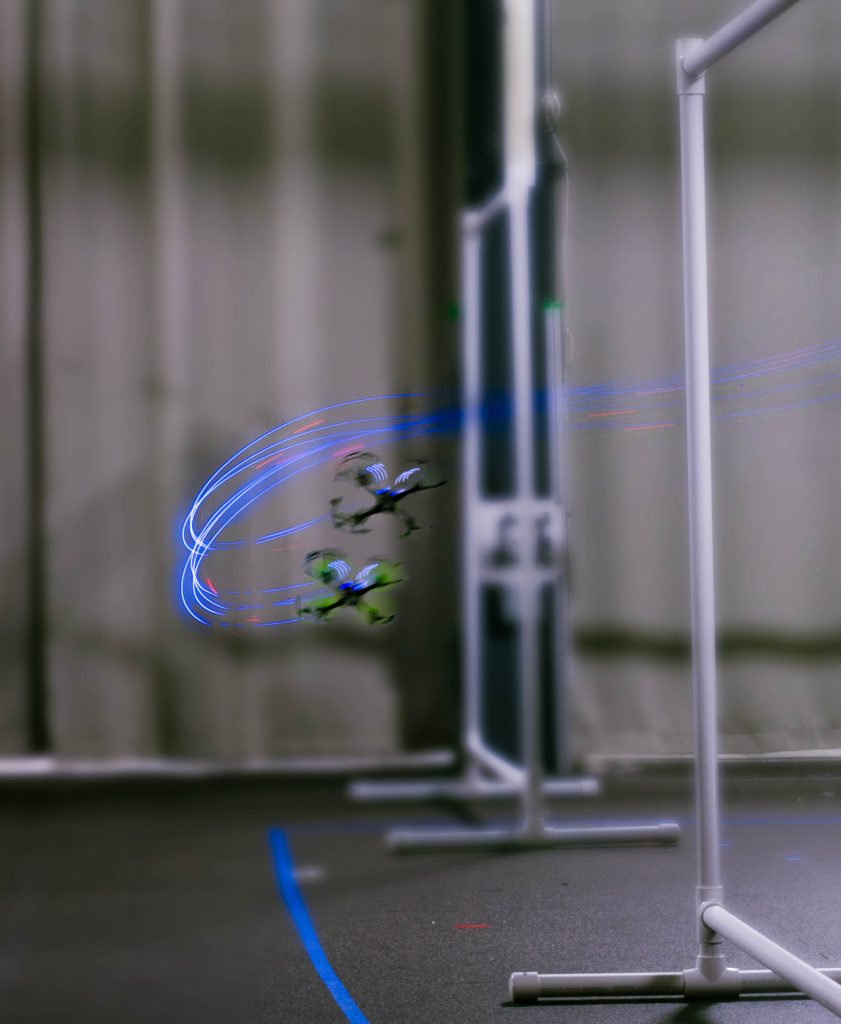

While all this work was simulated using high-performance computing resources like DeltaAI, moving the simulation to the real world is often the goal. In this case, Loquercio’s team found their methodology offered another surprising outcome. “Even more interestingly, we also found that our method allows for drone racing policies to transfer from simulation to reality (broadly referred to as the “sim-to-real gap”) far more robustly than existing methods, and allows for successful obstacle avoidance to occur.”

When Training Through Play Makes Sense

While this research shows that there is something to be gained from training AI in this manner, not every training situation benefits from gamifying the desired outcome. As Loquercio explains, training an AI to win a race is an ideal scenario for this kind of agentic learning.

“The objective of a robot is to successfully complete a task by autonomously taking actions in the environment,” he said. “When a task is well-defined, e.g., placing a pan on the stove, the optimal behavior is straightforward. However, there are many tasks where the optimal behavior is less predictable, especially when the optimal behavior is contingent on uncontrollable variables. In motorsport, for example, a racecar driver’s behavior is dependent on the behavior of the surrounding vehicles. An expert driver knows when to concede positions, defend a racing line, and overtake for the best chance at winning the race. We aim to unlock these same opponent-aware capabilities in autonomous drone racing. By forgoing strict prescription of the robot’s behaviors, and allowing the robot to discover solutions through trial-and-error across thousands of parallel simulations, we encourage game-theoretic solutions to emerge from the training process.”

Watching these micro-flyers race isn’t the end goal of this research – it’s about helping AI learn to navigate worlds where other independent elements are involved. Winning is just the goal of this particular research. Competitive training could lead to robots that protect, rather than just compete.

Take, for example, the global decline of the honeybee. In nature, bees face constant threats from invasive predators like the “murder wasp.” While a standard micro drone might struggle to keep up with the erratic movements of a wasp, a micro-flyer trained through this play-based competition could be different.

By learning the complex “game” of intercepting an opponent, these palm-sized protectors could be trained to identify a wasp’s approach and gently steer it away from a hive. Because the AI has practiced against thousands of different simulated opponents, it wouldn’t need a human to tell it how to react; it would have already discovered the most efficient way to guard the hive through millions of rounds of practice in the safety of a supercomputer.

“The biggest reward from this direction is the potential for robots to successfully handle complex competitor dynamics,” said Loquercio.

From Simulation to Real World

Simulations on machines like DeltaAI help researchers get answers quickly. In this case, allowing two AI agents to run and learn from each other would have led to many failures in each race. A simulated race is completed in a fraction of the time it would take to set each race up in the real world.

“Leveraging high-performance computing (HPC) is critical to the success of this work,” said Loquercio. “Our multi-agent reinforcement learning approach relies on 10240 parallel environments for agents to learn good behaviors through trial-and-error, especially when we forgo dense, behavior-prescribing rewards.”

Loquercio’s team secured time on DeltaAI through the U.S. National Science Foundation’s ACCESS program. “ACCESS resources allowed us to train drones across more than ten thousand parallel environments,” he said.

This is a very compute-heavy project. Without ACCESS, it would have taken much longer to get these results.

University of Pennsylvania

While important, the simulations are still just the starting point. Eventually, the goal is to see whether the AI agents can race with the same success using real-world micro-flyers. Loquercio’s team found that their work translated remarkably well to reality.

“One of the most important findings from our paper was that sparse and competitive rewards led to much stronger simulation-to-reality transfer,” he explained. “Our method achieved a considerably smaller gap between average flight speed in simulation and in the real world compared to the baseline. Our real-world collision rates were also significantly lower than the baseline. Empirically, competitive pressure amongst reinforcement learning agents appears to produce more robust performance in the real world as the sim-to-real gap becomes better traversed. This discovery is very exciting for us and has potential for profound research impact. We are continuing to investigate why this phenomenon occurs.”

You can find more reading about this research in the following articles:

A learning-based quadcopter controller with extreme adaptation in the journal IEEE Transactions on Robotics.

Champion-level drone racing using deep reinforcement learning in the journal Nature.Learning high-speed flight in the wild in the journal Science Robotics.

ABOUT DELTA AND DELTAAI

NCSA’s Delta and DeltaAI are part of the national cyberinfrastructure ecosystem through the U.S. National Science FoundationACCESS program. Delta (OAC 2005572) is a powerful computing and data-analysis resource combining next-generation processor architectures and NVIDIA graphics processors with forward-looking user interfaces and file systems. The Delta project partners with the Science Gateways Community Institute to empower broad communities of researchers to easily access Delta and with the University of Illinois Division of Disability Resources & Educational Services and the School of Information Sciences to explore and reduce barriers to access. DeltaAI (OAC 2320345) maximizes the output of artificial intelligence and machine learning (AI/ML) research. Tripling NCSA’s AI-focused computing capacity and greatly expanding the capacity available within ACCESS, DeltaAI enables researchers to address the world’s most challenging problems by accelerating complex AI/ML and high-performance computing applications running terabytes of data. Additional funding for DeltaAI comes from the State of Illinois.