NCSA has sponsored Pygmalion for many years, finding interesting and exciting ways to get involved with the local community during this annual cultural festival. The sponsorship has helped show that NCSA is more than just supercomputers, and this year was no exception. NCSA’s Advanced Visualization Lab (AVL) partnered with musician Arushi Jain, also known as Modular Princess, to create a dazzling blend of sight and sound.

The evening’s event was planned relatively quickly. The AVL team met with Jain over Zoom to discuss how to create a visual landscape to match her soundscape. Jeff Carpenter, AVL’s research visual designer, explained how the show came together.

“[Jain] was going to be on tour and traveling out of the country,” Carpenter said. “We decided to keep things simple and use her voice and music as two distinct audio channels to drive the visualizations instead of trying to grab data generated from her modular synth. That way, we could work with the recordings in her absence to play around and test our ideas – making final adjustments during the sound check just before showtime.”

Jain’s website says her work “focuses on extending Indian Classical music beyond the scope of acoustic instrumentation by utilizing voltage-based musical interfaces.” Jain provided the AVL team with a set list and lighting cues. With these, the team was able to choose visualizations that matched the moods and colors that would be washing the stage in sound and light. “We began to arrange our visualizations with the corresponding color palettes and song timings,” Carpenter explained. “We knew that longer songs would need a few options to keep things visually interesting.”

From here, they created a playlist of their own, choosing popular visualizations AVL had created in the past and creating some funky new visuals to match the music. “We used Touch Designer to develop audio reactive visualizations,” Carpenter said, “interactive tools to modify color and sound levels on the fly, and to act like a video switcher to change between scenes.” AVL even flew one of the remote members of their team out from Delaware to complete the work. “AVL’s most recent hire, Brad Thompson, had worked with Touch Designer for the interactive pre-show for this year’s SIGGRAPH Electronic Theater that Kalina [Borkiewicz] was conference chair for. His experience with this software was invaluable, and we wouldn’t have been as successful without him.”

If the audience noticed the visuals changing and moving along with the music and colors, even as Jain paused, they weren’t imagining it. Even though the team had met beforehand and prepared a cue sheet for the visuals, there was still an element of spontaneity in the concert. “Even with this plan,” Carpenter said, “we did call a few audibles during the live performance when song timings or lighting cues changed.”

Some of the visuals included familiar sights, like the photosynthesis visualization that blanketed the stage in greens and oranges, making the music feel hopeful and renewing, reminiscent of spring and plants coming back into the sun. However, others were created specifically for the program. “I created a kaleidoscope visualization by modeling a tube primitive in Touch Designer,” said Carpenter. Thompson turned data from two astronomical simulations, an evolving galaxy and a black-hole-shredded star, into shimmering sheets and clouds of glowing particles; Senior Visualization Programmer Mark Van Moer built effects that looked like raindrops or flowers or wiggling curves; and Senior Research Programmer Stuart Levy created ball-like sea-creatures, all responsive to Jain’s synthesizer and voice.

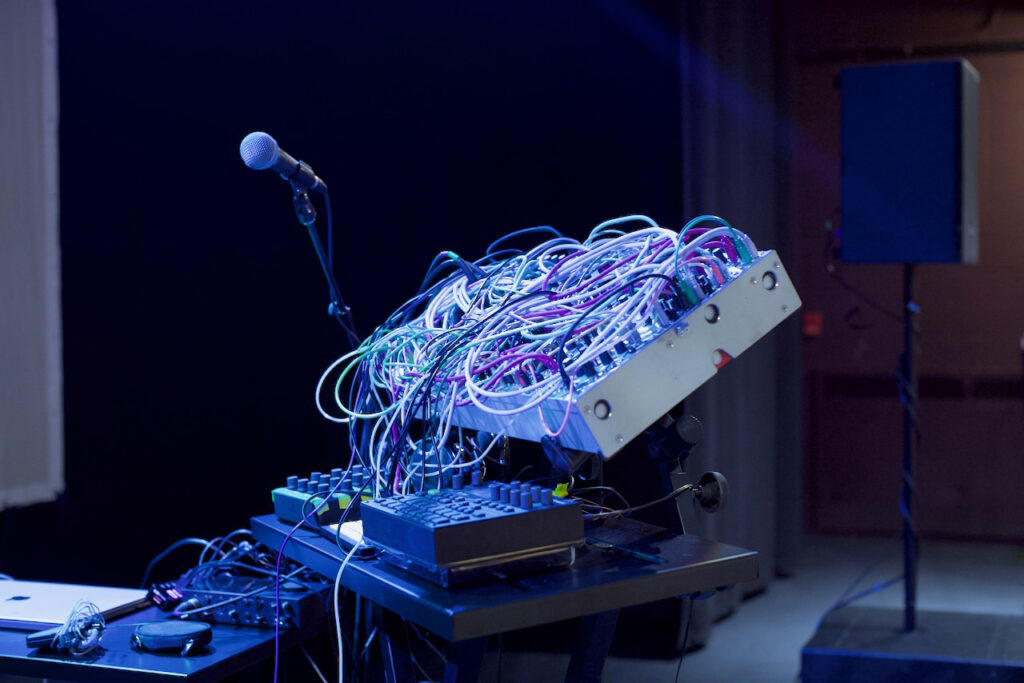

The team further adjusted the visuals with Synesthesia, an interactive music visualizer application that let them apply pre-made effects and filters to their visualizations, giving them a new look. The resulting complex dance between sight and sound was an electrifying spectacle. The audience was entranced by Jain’s thrumming, emotional songs, layered with sounds and voice, creating a rich tapestry of movement, both sonically and visually. Jain performs with a huge soundboard, mixing and blending sounds with the songs she sings. For many audience members, it was a new type of music to witness. “I really enjoyed the concert, even though it’s a genre of music I’m less familiar with,” said Kelli Trei, a biology librarian from the ACES library on campus.

Trei described the style of artistry as intriguing. “Watching her perform with the modules and listening to her mix the sounds was interesting. I also really enjoyed the visual display.” Trei said the visual experience impacted the way she heard the music. “I felt like the music sounded different to me depending on what was happening on the screen.” She described this effect as being more pronounced the longer she watched, with the visuals impacting how she interpreted the music.

Rini Mehta, a professor of literature and world religion and an NCSA affiliate with a specialty in Natural Language Processing (NLP), was inspired by the event to think up ways a team like AVL could improve accessibility. “The visualizations were mind-blowing,” Mehta said. “I, in particular, would be interested in experimenting with songs (with deep meanings, such as Tagore’s corpus or Bach’s Cantatas) and see if Visualization can come up with a visual code/translation of the words and melody. Such experimentation has far-reaching applications. For instance, if someone is hearing-disabled and can only see/read a song (as a poem) would the visualization be able to translate the melody to such a person? I think it is worth a try!”

“It was entrancing,” said Jake Metz. Metz has a lot of experience with audio-visual arts as UIUC’s Media Commons support specialist and as an AV producer. “I’ve listened to a lot of this type of music, trance-y modular, synth stuff. I’ve listened to hours and hours and hours of this kind of music. I often listen to it for meditation, so it puts me in that mood. It was very overwhelming.”

“It’s really interesting to have such pure synthesis tones,” Metz said. “You get these intersecting harmonic structures that shift and phase over time. And then the sequences of melodies phases as well, so the air is pulsing around you in the audience.”

Living legend Donna Cox, retired AVL director, also took in the show. Her old team was in fine form, she said. “I thought it was a beautiful mix of graphics, scientific visualization and mesmerizing music!” She was excited to see her team involved in a program like this. “They did a wonderful job. I loved it.”

After the final bow, once the stage had been struck, Jain met again with the AVL team to review the performance. That’s when the team learned that Jain had been so deeply absorbed in her performance that she didn’t get to see the visualizations herself.

“She invited the visualization team to talk with her afterward,” Stuart Levy, senior research programmer, said. “She was very gracious with us and was delighted to see a few samples of what we had contributed. She was fascinated and had a bunch of questions, from ‘What’s happening in this visualization?’ to ‘Are you programmers?’ and ‘How did you make these?’ We had only limited time to talk, but I hope we’ll have a chance for future conversations, and maybe even for future collaboration.”